A Roadmap for GUI Agents: Where We Are and What Comes Next

TL;DR: In this post, I summarize what I've come to see as the emerging consensus: the field is scaling long-horizon capability by training in controllable environments with verifiable feedback. The next step is pushing safety far enough to enable true asynchronous delegation—handing a task to an agent and walking away.

Why I'm writing this

Over the past year, I've felt GUI Agents shift from a mostly "research topic" into a technology that's increasingly close to real deployment.

You can see it in product directions: some assistants try to operate desktops, click through websites, and complete tasks inside real apps; some phones even treat "AI operating your phone" as a core capability.

As a researcher in this space, I want to clarify the trend I'm seeing:

Where is the field right now—and what are the hardest bottlenecks ahead?

To make the roadmap concrete, I'll keep coming back to two questions:

- Capability: can the agent finish a task end-to-end? (long-horizon planning, error recovery, multi-page / multi-app workflows)

- Deployability: can the agent handle one-way-door decisions and adversarial attacks (payments, transfers, submitting sensitive data)

A lot of discussion focuses on capability. In practice, deployability is often the gate that decides whether these systems ever leave the lab.

S0–S4: Five stages from "talking" to "actually usable"

This is the stage model I use to understand the field.

| Stage | Example | Typical Form | What Improves | Main Bottleneck |

|---|---|---|---|---|

| S0 | General VLMs (e.g., GPT-4o) | Screenshot → Q&A ("What should I click?") | Multimodal understanding | No reliable action execution |

| S1 | Grounding-focused systems (e.g., GTA1-style) | "It can click" (planning + grounding) | Real UI interaction becomes possible | Long-horizon drift |

| S2 (today) | MAI-UI, UI-TARS | Learn-by-doing: scale environments + large-scale RL | Long-horizon learning via on-policy RL | Weak robustness / generalization |

| S3 (early deployment) | Doubao Mobile Assistant | Used in the real world | Governance around irreversible actions | One-way-door safety |

| S4 (mainstream) | N/A | Broad adoption | Reliable, controllable autonomy | Error rate must be extremely low |

S0: The screen Q&A era — "It can explain, but it won't do it for you"

When VLMs first appeared, I almost immediately tried to use them for screen automation. And I failed. Back then, outputs were unreliable—pixel-level localization often missed. My intuition at the time was:

Grounding (mapping language to tiny targets on a screen) might be the hardest part of GUI agents—especially when the vision encoder was still much smaller than language decoder.

In the S0 stage, the workflow I usually use is: When I face a new website or an unfamiliar UI and don't feel like learning it from scratch, I do something simple:

- tell the model what I want to do

- paste a screenshot

- ask: "what should I do next?"

What I get back is usually not pixel coordinates, but directional guidance, such as "the entry is in the top-right". Then I can quickly locate it and click it.

This works because VLMs have strong world knowledge about common UI patterns—and in some cases, they may have even seen the specific site during training.

But the nature of S0 hasn't changed:

It's a Q&A machine. It helps me decide—but it doesn't reliably execute.

And that's not the end goal.

S1: The planning + grounding combo… until it starts drifting

The sign of S1 is that "it can click" becomes usable.

A common system shape emerges naturally:

- a planning model decides what to do next (often using an S0-style VLM)

- a grounding model finds actionable UI elements and executes clicks/typing

Short tasks suddenly look great. Then you try longer ones.

As trajectories get longer, things drift:

- popups appear

- the agent slowly "forgets what it was doing"

- the agent makes bad decision

For a long time, including for me, it was natural to think grounding was the main bottleneck.

That changed when I started watching trajectory-level benchmark traces and doing failure attribution.

When I realized grounding is "mostly solved" (in practice)

Recently I looked closely at trajectories on trajectory-level benchmarks, and tried to split failures into categories:

- was it inaccurate clicking?

- was it a wrong plan / wrong strategy?

- was it something else?

The pattern was striking:

In most failures I observed, the root cause was planning, not low-level action precision.

This also made me more cautious about interpreting grounding benchmarks.

Many grounding benchmarks intentionally make targets extremely tiny—sometimes a fraction of a percent of the screen. Honestly, as a human, I sometimes need time to find those targets too.

So a model getting "only 70%" on a hard grounding benchmark doesn't necessarily mean it can't operate real UIs. Meanwhile, when systems collapse on real trajectories, it's rarely because of "a 12-pixel miss"—it's because the agent fails to keep making stable progress toward a long-horizon goal.

If grounding is no longer the main battlefield, what is?

That's S2.

S2 (where the field is today): Trajectory-level scaling

Once you accept that long-horizon behavior is the bottleneck, the direction is almost inevitable:

If you want an agent to learn long tasks, you need it to run many long trajectories—and learn from the feedback.

So the field is converging on one theme:

Trajectory-level scaling

(put the leverage on scaling long trajectories, not just improving single-step perception)

Within that theme, the most promising path (in my view) is:

Controllable environments

Because they can generate large amounts of interaction data and support unlimited on-policy learning—whether RL or online SFT.

Whether S2 succeeds, in my mind, depends on four essentials.

Four pillars for "learning from the environment"

Task

To learn, you need a lot of tasks.

But tasks in environment learning are not just prompts. A task needs at least:

- a reproducible initial state

- a definition of success/failure

A success definition matters because it is the learning signal. In practice, we often encapsulate this in an evaluator.

Evaluator (the biggest bottleneck, in my view)

An evaluator defines what "success" means. A simple-sounding example that is not simple at all: "set an alarm."

A truly reliable check isn't "the UI looks right." It's something like:

- query the alarm app's SQLite database to confirm a new alarm record was created

To do this, you need:

- evaluation code

- permissions into the app/system state

And it's not something you can assume. In practice, you often don't have privileged access—especially for real-world, closed-source business apps. So the painful reality is:

Scaling tasks + evaluators today still depends heavily on humans writing a lot of evaluation code.

You can use VLM-as-a-judge, but then your learning signal is bounded by model reliability (in a way, it becomes similar to reward-model fragility). So when deterministic evaluation is possible, people still prefer it.

Infra

At this stage, infra stops being "just engineering overhead" and becomes a research ceiling.

If you want hundreds of parallel rollouts, you need to orchestrate hundreds of VMs/emulators:

- reset / snapshots

- failure recovery

- maximizing CPU/GPU utilization

- scheduling, queuing, isolation

Many open-source RL infra stacks focus on optimizing training compute, but aren't deeply optimized for VM-heavy GUI rollout systems. Often the bottleneck isn't gradients—it's environment throughput and orchestration.

Learning signal (data efficiency)

GUI agents are inherently more signal-starved than coding agents.

Coding agents have a complete ecosystem:

- issues as tasks

- unit tests / CI as learning signals

- an IDE/toolchain as a stable environment

Computer-use agents don't get these "free gifts." Often you only have a VM—no issues, no tests.

So data efficiency becomes critical. One question I keep coming back to is:

If a 100-step trajectory is correct for the first 50 steps and wrong for the last 50, can I salvage the "correct half" as useful training signal?

S3: When capability is "good enough," the real gate becomes deployment

Once long-horizon capability is good enough to do real work, the question becomes blunt:

Do we dare to deploy?

In deployment, risk naturally splits into two categories: recoverable (two-way doors) vs irrecoverable (one-way doors).

Two types of risk: two-way doors vs one-way doors

Many failures look scary but are actually two-way doors—you can back out.

If an agent messes up a local Excel/Word document, or even deletes a file, it's bad—but not necessarily irreversible. With strong engineering guardrails (snapshots, rollback, audit logs), you can recover. That's why a natural system shape is to run agents inside VMs / sandboxes: isolation + snapshots can turn many errors into recoverable two-way doors.

But once an agent is truly "online," things change.

Account deletion, mailbox wipes, irreversible submissions, permission revocations—these often can't be fixed by restoring a local VM snapshot. The damage happened in an external system.

That's the true one-way door:

- deleting accounts / permanent state changes

- irreversible messages and form submissions

- higher-stakes one-way doors: payments, transfers, sensitive data leakage

Once money is transferred, losses can be huge—and often unrecoverable.

So to me, S3 is really two problems stacked together:

The engineering problem: make two-way doors recoverable and governable

Industry is best positioned to engineer strong guardrails for recoverable risks:

- run agents in isolation (VM/sandbox)

- snapshots and rollback

- permission and workflow controls: system-level interception, confirmation, and auditing for risky actions

In short: convert as many failure modes as possible into two-way doors.

This can make products usable earlier—even if capability is not perfect.

(For example, there are open-source projects exploring VM-based computer-use setups, such as: https://github.com/trycua/cua)

The research problem: teach models to recognize one-way doors—and stop

One-way doors can't be solved by engineering alone. They require models to know:

which actions are high-risk, irreversible, and should trigger escalation.

Hard research problems here include:

- recognizing one-way-door situations (when to stop, ask, or require elevated permission)

- defending against prompt injection / social engineering in real web environments

- maintaining a conservative policy under persuasion, deception, and phishing-like UIs ("better refuse than do the wrong thing")

This is also why I expect gradual deployment pressure: benchmarks will never cover the full real world. UI variants, login flows, adversarial tactics, and edge cases are endless.

So S3 will inevitably attract criticism:

- "this isn't safe"

- "under attack it could wipe your email"

- "it could leak privacy or make irreversible mistakes"

That criticism is valid. Which means early users will mostly be early adopters.

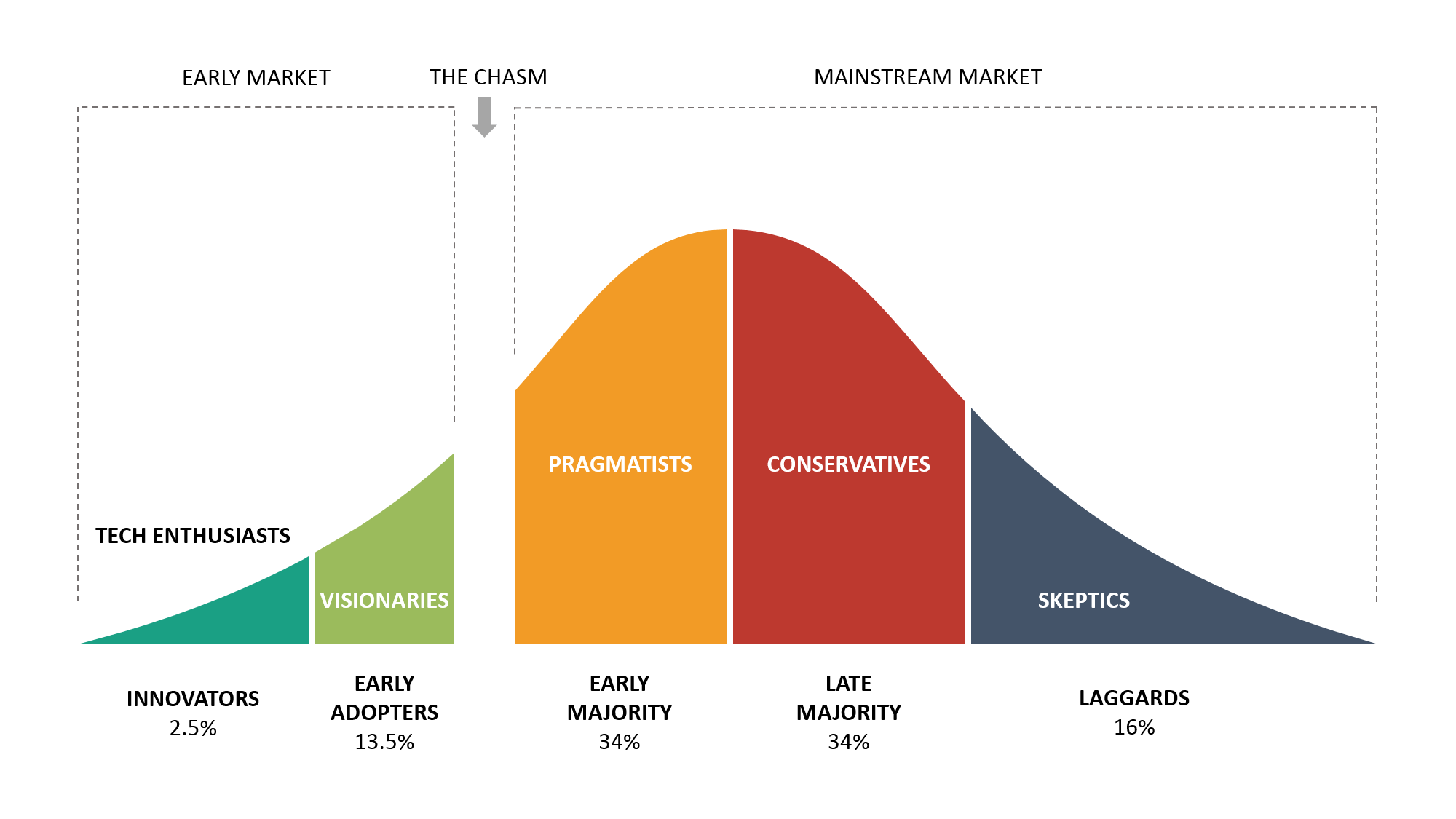

If you view it through the technology adoption lifecycle, S3 feels like the period before crossing the chasm:

some users are impressed by capability, but most are not yet comfortable trusting it.

Crossing that gap won't come from "a few more points." It will require pushing two-way-door engineering guardrails and one-way-door model safety to a reliability threshold that actually earns trust.

S4: Mainstream adoption demands an extremely low error rate

S4 isn't "a bit better." It's a phase change: controllability becomes high enough that ordinary users treat the agent as a default option.

The easiest point to underestimate is this:

In one-way-door settings, 99% is not high.

A 1% failure rate is catastrophic.

If an agent does 100 tasks correctly 99 times but makes one mistake—that mistake could wipe your mailbox or leak bank credentials.

Would you let it do the other 99 tasks?

Probably not.

So the safety bar becomes extremely, extremely high. S4 looks like:

- error rates down to 0.01% or lower

- measured under real distributions, not only curated lab tasks

- coupled with governance mechanisms robust to real-world adversarial pressure

Only then does a computer-use agent move from "a power tool for a few" to "infrastructure for the many."

Closing: This roadmap is a way to locate which mountain you're climbing

If I compress the story into four lines:

- Grounding used to be the first mountain; in practice, we're largely past it.

- The frontier now is trajectory-level scaling: learning to finish long, messy digital work.

- The next mountain is deployability—especially one-way-door safety.

- The endgame is mainstream asynchronous delegation as a default workflow.

If you work in this area, knowing which mountain you're climbing matters. Each stage demands different system capabilities—not just "a smarter model."

And beyond capability, the ultimate bottleneck is trust.

I'm currently looking for a 2026 summer internship focused on scaling RL infrastructure for computer-use agents—feel free to reach out if this aligns with your team.